Most MVPs do not fail because of bad engineering.

They fail because of decisions made before development begins.

In early product discussions, pressure builds quickly. Stakeholders want momentum. Teams want visible progress. AI tools make execution feel faster and more accessible than ever.

Speed is no longer the constraint.

Clarity is.

CB Insights’ widely cited analysis of startup failures ranks building something the market does not need among the top reasons startups fail, above technical execution issues¹. Failure rarely begins in code. It begins in misdiagnosed problems, undefined scope, and assumptions that were never tested.

By the time those assumptions surface, capital and credibility have already been spent.

The damage was done before a single line of code mattered.

The most expensive mistakes rarely originate in engineering. They originate in ambiguity.

Common early-stage failures include:

- Committing to an unclear job-to-be-done
- Expanding scope to accommodate competing stakeholders
- Selecting architecture before validating constraints
- Applying AI because it is available, not because it is necessary
- Defining success after launch instead of before build

These risks align with principles introduced in The Lean Startup, which emphasizes validated learning over feature accumulation².

An MVP is not a smaller version of the final product.

It is a focused experiment designed to generate signal.

When teams treat MVPs as mini-products instead of learning instruments, they inflate scope and dilute insight.

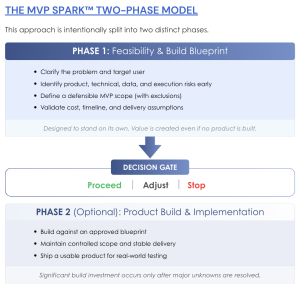

At Acuvity, we formalized this discipline through MVP Spark™, a feasibility-first model for product decision-making.

The model is intentionally structured in two phases.

- Clarify the primary user and core workflow
- Surface product, technical, data, AI, and execution risks
- Define Must, Defer, and Exclude boundaries
- Establish explicit exclusions
- Define what success actually looks like

The first phase, feasibility, is designed to stand on its own. Value is created even if no product is built.

Execution begins only after scope, architecture, risks, and success criteria are deliberately defined and agreed.

This two-phase decision gate structure is outlined in the MVP Spark™ Playbook.

Build investment is earned, not assumed.

AI has dramatically reduced the cost of experimentation and scaffolding.

McKinsey’s 2023 report on generative AI highlights significant productivity gains in software development, while emphasizing the importance of governance, architecture discipline, and risk controls³.

The danger is not AI complexity.

The danger is accelerating the wrong direction with greater confidence.

In a feasibility-led system, AI is applied selectively:

- At one or two deliberate leverage points within a core workflow
- With clearly defined inputs and outputs
- With guardrails around data usage and oversight

This philosophy aligns with responsible AI governance frameworks from organizations such as NIST and OECD⁴.

AI multiplies intent.

Multiplying ambiguity is not progress.

Scope creep is rarely malicious. It emerges from good intentions: accommodating additional stakeholder requests, covering edge cases, or accepting seemingly harmless feature additions.

Left unchecked, those additions dilute signal and inflate cost.

The MVP Spark™ Must, Defer, and Exclude framework, detailed in the Playbook, creates shared language for holding the line on scope.

Explicit exclusions are not limitations.

They are protections.

Behavioral economics research on sunk cost bias shows that once organizations commit resources, they struggle to stop investing even when evidence weakens⁵.

Tight scope boundaries reduce that bias before it takes hold.
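One way to make Must, Defer, and Exclude boundaries concrete is to record every request against exactly one bucket and to treat anything unclassified as an open question rather than implied scope. The sketch below is illustrative only: the `ScopeRegister` class, the example requests, and the rule that only Must items enter the build are assumptions made for this example, not definitions taken from the Playbook.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Bucket(Enum):
    MUST = "must"        # required for the experiment to produce signal
    DEFER = "defer"      # plausible later, not part of this build
    EXCLUDE = "exclude"  # explicitly out of scope


@dataclass
class ScopeRegister:
    """Hypothetical in-memory register of scope decisions."""
    items: Dict[str, Bucket] = field(default_factory=dict)

    def classify(self, request: str, bucket: Bucket) -> None:
        # Each request sits in exactly one bucket; reclassifying overwrites.
        self.items[request] = bucket

    def in_build_scope(self, request: str) -> bool:
        # Unclassified requests are rejected instead of silently accepted.
        if request not in self.items:
            raise KeyError(f"unclassified request: {request!r}")
        return self.items[request] is Bucket.MUST

    def build_scope(self) -> List[str]:
        # Only Must items enter the build (an assumption of this sketch).
        return sorted(r for r, b in self.items.items() if b is Bucket.MUST)


register = ScopeRegister()
register.classify("submit intake form", Bucket.MUST)
register.classify("bulk CSV import", Bucket.DEFER)
register.classify("native mobile app", Bucket.EXCLUDE)
print(register.build_scope())  # ['submit intake form']
```

Raising an error for unclassified requests is a deliberate choice in this sketch: it forces the scope conversation to happen before the request reaches the backlog.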

In a feasibility-first model, launch is treated as a controlled experiment.

The objective is not visibility.

It is evidence.

Evidence is defined during feasibility, not retrofitted after launch. Typical signals include:

- Adoption within the core workflow
- Measurable effort reduction
- Repeat engagement
- Where users hesitate, abandon, or work around the workflow

After launch, there are only three rational paths:

- Scale
- Iterate
- Stop

Stopping is not failure.

It is disciplined capital allocation based on evidence rather than optimism.
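For teams that track launch signals in code, the gate between Scale, Iterate, and Stop can be sketched as a small function that compares observed evidence with thresholds agreed during feasibility. This is a minimal sketch under stated assumptions: the field names, the threshold values, and the rule separating Iterate from Stop are hypothetical and are not part of MVP Spark™.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    ITERATE = "iterate"
    STOP = "stop"


@dataclass(frozen=True)
class SuccessCriteria:
    """Thresholds agreed before build (hypothetical fields)."""
    min_adoption_rate: float     # share of target users completing the core workflow
    min_effort_reduction: float  # fractional reduction in time or steps
    min_repeat_rate: float       # share of adopters who come back


@dataclass(frozen=True)
class LaunchEvidence:
    """Signals observed after launch, measured the same way as the criteria."""
    adoption_rate: float
    effort_reduction: float
    repeat_rate: float


def decide(criteria: SuccessCriteria, evidence: LaunchEvidence) -> Decision:
    met = [
        evidence.adoption_rate >= criteria.min_adoption_rate,
        evidence.effort_reduction >= criteria.min_effort_reduction,
        evidence.repeat_rate >= criteria.min_repeat_rate,
    ]
    if all(met):
        return Decision.SCALE    # every pre-agreed threshold was met
    if any(met):
        return Decision.ITERATE  # partial signal: refine and re-test
    return Decision.STOP         # no threshold met: stop investing


criteria = SuccessCriteria(0.40, 0.25, 0.50)
evidence = LaunchEvidence(adoption_rate=0.45, effort_reduction=0.10, repeat_rate=0.55)
print(decide(criteria, evidence).value)  # iterate
```

The criteria object is frozen on purpose. Fixing the thresholds before launch is what keeps the decision from being retrofitted to whatever the data happens to show.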

This decision-gate discipline is central to MVP Spark™.

Speed is valuable in competitive markets.

But speed without clarity produces rework.

In our broader growth framework, Market Navigator™, we emphasize strategic sequencing so opportunity selection precedes aggressive execution.

Similarly, organizations evaluating AI-driven initiatives should consider governance and readiness alongside ambition, principles addressed in AI Compass™.

Feasibility compresses uncertainty early, where decisions are cheaper and clearer.

The goal is not to build faster.

It is to learn faster.

At Acuvity Consulting, a California boutique management consulting firm and Big 4 alternative, this feasibility-first approach reflects how we work across strategy and technology engagements. As a minority-owned consulting firm based in California, we combine Big 4 experience with boutique-level focus and execution discipline.

Many mid-market and growth-stage organizations seeking alternatives to Deloitte or Bain in California are not looking for scale alone. They are looking for clarity, senior-level involvement, and structured decision frameworks that align capital, technology, and market opportunity. Our work across healthcare, manufacturing, and technology sectors reflects that principle.

Feasibility-first thinking is not a product tactic. It is a capital discipline embedded in how modern boutique consulting firms operate when strategy and technology must align.

Not every idea should be built.

The right ones should be built with discipline.

Clarity before code is not hesitation.

It is responsible momentum.

MVP Spark™ exists for leaders who want confidence before committing capital and credibility, especially when evaluating AI-driven or technology-enabled initiatives.

If you are exploring a new product concept, the most responsible first step is not development.

It is structured feasibility.

Learn more about the MVP Spark™ framework here.

Or schedule a focused working session:

https://calendly.com/venkatavasarala

¹ CB Insights, “The Top 12 Reasons Startups Fail.” https://www.cbinsights.com/research/startup-failure-reasons-top/

² Eric Ries, The Lean Startup, Crown Business (2011).

³ McKinsey & Company, “The Economic Potential of Generative AI,” 2023. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-economic-potential-of-generative-ai

⁴ NIST AI Risk Management Framework, 2023. https://www.nist.gov/itl/ai-risk-management-framework

⁵ Kahneman, D., and Tversky, A., “Prospect Theory: An Analysis of Decision Under Risk,” Econometrica (1979).

Venkat Avasarala Apr 24, 2026
